# usls

[crates.io](https://crates.io/crates/usls) | [ONNXRuntime Releases](https://github.com/microsoft/onnxruntime/releases) | [CUDA Toolkit](https://developer.nvidia.com/cuda-toolkit-archive) | [TensorRT](https://developer.nvidia.com/tensorrt) | [docs.rs](https://docs.rs/usls)

A Rust library integrated with **ONNXRuntime**, providing a collection of **Computer Vision** and **Vision-Language** models including [YOLOv5](https://github.com/ultralytics/yolov5), [YOLOv6](https://github.com/meituan/YOLOv6), [YOLOv7](https://github.com/WongKinYiu/yolov7), [YOLOv8](https://github.com/ultralytics/ultralytics), [YOLOv9](https://github.com/WongKinYiu/yolov9), [YOLOv10](https://github.com/THU-MIG/yolov10), [RTDETR](https://arxiv.org/abs/2304.08069), [SAM](https://github.com/facebookresearch/segment-anything), [MobileSAM](https://github.com/ChaoningZhang/MobileSAM), [EdgeSAM](https://github.com/chongzhou96/EdgeSAM), [SAM-HQ](https://github.com/SysCV/sam-hq), [FastSAM](https://github.com/CASIA-IVA-Lab/FastSAM), [CLIP](https://github.com/openai/CLIP), [BLIP](https://arxiv.org/abs/2201.12086), [DINOv2](https://github.com/facebookresearch/dinov2), [YOLO-World](https://github.com/AILab-CVC/YOLO-World), [PaddleOCR](https://github.com/PaddlePaddle/PaddleOCR), [Depth-Anything](https://github.com/LiheYoung/Depth-Anything) and others.

Demos: Segment Anything, YOLO + SAM, Monocular Depth Estimation.

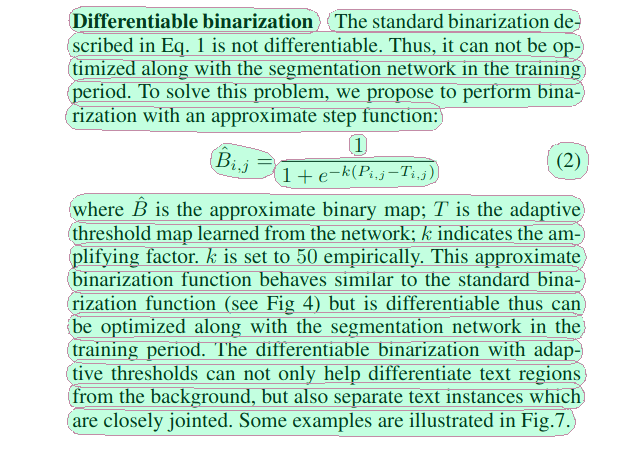

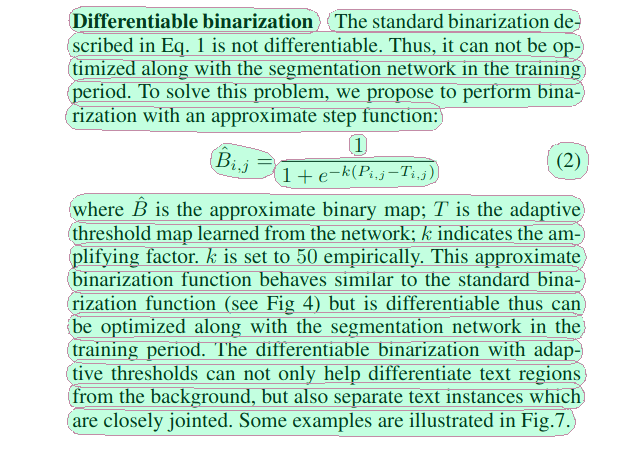

Demos: Panoptic Driving Perception, Text-Detection-Recognition.

## Supported Models

| Model | Task / Type | Example | CUDA<br>f32 | CUDA<br>f16 | TensorRT<br>f32 | TensorRT<br>f16 |
| :---: | :---: | :---: | :---: | :---: | :---: | :---: |
| [YOLOv5](https://github.com/ultralytics/yolov5) | Classification<br>Object Detection<br>Instance Segmentation | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [YOLOv6](https://github.com/meituan/YOLOv6) | Object Detection | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [YOLOv7](https://github.com/WongKinYiu/yolov7) | Object Detection | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [YOLOv8](https://github.com/ultralytics/ultralytics) | Object Detection<br>Instance Segmentation<br>Classification<br>Oriented Object Detection<br>Keypoint Detection | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [YOLOv9](https://github.com/WongKinYiu/yolov9) | Object Detection | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [YOLOv10](https://github.com/THU-MIG/yolov10) | Object Detection | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [RTDETR](https://arxiv.org/abs/2304.08069) | Object Detection | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [FastSAM](https://github.com/CASIA-IVA-Lab/FastSAM) | Instance Segmentation | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [SAM](https://github.com/facebookresearch/segment-anything) | Segment Anything | [demo](examples/sam) | ✅ | ✅ | | |
| [MobileSAM](https://github.com/ChaoningZhang/MobileSAM) | Segment Anything | [demo](examples/sam) | ✅ | ✅ | | |
| [EdgeSAM](https://github.com/chongzhou96/EdgeSAM) | Segment Anything | [demo](examples/sam) | ✅ | ✅ | | |
| [SAM-HQ](https://github.com/SysCV/sam-hq) | Segment Anything | [demo](examples/sam) | ✅ | ✅ | | |
| [YOLO-World](https://github.com/AILab-CVC/YOLO-World) | Object Detection | [demo](examples/yolo) | ✅ | ✅ | ✅ | ✅ |
| [DINOv2](https://github.com/facebookresearch/dinov2) | Vision-Self-Supervised | [demo](examples/dinov2) | ✅ | ✅ | ✅ | ✅ |
| [CLIP](https://github.com/openai/CLIP) | Vision-Language | [demo](examples/clip) | ✅ | ✅ | ✅ visual<br>❌ textual | ✅ visual<br>❌ textual |
| [BLIP](https://github.com/salesforce/BLIP) | Vision-Language | [demo](examples/blip) | ✅ | ✅ | ✅ visual<br>❌ textual | ✅ visual<br>❌ textual |
| [DB](https://arxiv.org/abs/1911.08947) | Text Detection | [demo](examples/db) | ✅ | ✅ | ✅ | ✅ |
| [SVTR](https://arxiv.org/abs/2205.00159) | Text Recognition | [demo](examples/svtr) | ✅ | ✅ | ✅ | ✅ |
| [RTMO](https://github.com/open-mmlab/mmpose/tree/main/projects/rtmo) | Keypoint Detection | [demo](examples/rtmo) | ✅ | ✅ | ❌ | ❌ |
| [YOLOPv2](https://arxiv.org/abs/2208.11434) | Panoptic Driving Perception | [demo](examples/yolop) | ✅ | ✅ | ✅ | ✅ |
| [Depth-Anything<br>(v1, v2)](https://github.com/LiheYoung/Depth-Anything) | Monocular Depth Estimation | [demo](examples/depth-anything) | ✅ | ✅ | ❌ | ❌ |
| [MODNet](https://github.com/ZHKKKe/MODNet) | Image Matting | [demo](examples/modnet) | ✅ | ✅ | ✅ | ✅ |

## Installation

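Refer to the [ort docs](https://ort.pyke.io/setup/linking) for details on linking ONNXRuntime.

For Linux or macOS users:

- Download a release from [ONNXRuntime Releases](https://github.com/microsoft/onnxruntime/releases)

- Then link it by pointing `ORT_DYLIB_PATH` at the downloaded library (adjust the path to your own download location):

```Shell
export ORT_DYLIB_PATH=/Users/qweasd/Desktop/onnxruntime-osx-arm64-1.17.1/lib/libonnxruntime.1.17.1.dylib
```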
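A misconfigured `ORT_DYLIB_PATH` is an easy mistake to make at this step. The following is a minimal sketch, using only the Rust standard library, of a check you could run before loading any model. The helper name `validate_dylib_path` is hypothetical and is not part of `usls` or `ort`; it only confirms that the variable is set and points at an existing file, not that the file is a compatible ONNXRuntime build.

```rust
use std::env;
use std::path::{Path, PathBuf};

/// Hypothetical helper (not part of `usls` or `ort`): checks that a candidate
/// value for `ORT_DYLIB_PATH` is present, non-empty, and names an existing file.
fn validate_dylib_path(value: Option<&str>) -> Result<PathBuf, String> {
    let raw = value.ok_or_else(|| "ORT_DYLIB_PATH is not set".to_string())?;
    if raw.trim().is_empty() {
        return Err("ORT_DYLIB_PATH is set but empty".to_string());
    }
    let path = Path::new(raw);
    if !path.is_file() {
        return Err(format!("ORT_DYLIB_PATH does not point to a file: {raw}"));
    }
    Ok(path.to_path_buf())
}

fn main() {
    // Read the variable from the environment; `None` if unset or not valid Unicode.
    let value = env::var("ORT_DYLIB_PATH").ok();
    match validate_dylib_path(value.as_deref()) {
        Ok(path) => println!("ONNXRuntime library found at {}", path.display()),
        Err(msg) => eprintln!("warning: {msg}"),
    }
}
```

Taking the value as an `Option<&str>` rather than reading the environment inside the helper keeps the check easy to exercise without touching process-wide state.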

## Quick Start

```Shell
cargo run -r --example yolo # blip, clip, yolop, svtr, db, ...
```

## Integrate into your own project

```Shell
# Add `usls` as a dependency to your project's `Cargo.toml`
cargo add usls

# Or pin a specific commit by adding this line under `[dependencies]` in `Cargo.toml`
usls = { git = "https://github.com/jamjamjon/usls", rev = "???sha???" }
```
